Evaluate your agent’s performance

Run evaluations on curated datasets during development to compare agent versions, benchmark performance, and catch regressions before users do.

Monitor performance in production with online evals that score user interactions with your agent in real-time to detect issues and measure quality.

- Calibrate llm-as-judge evals with human feedback

- Conversation evals

- Multi-modal evals

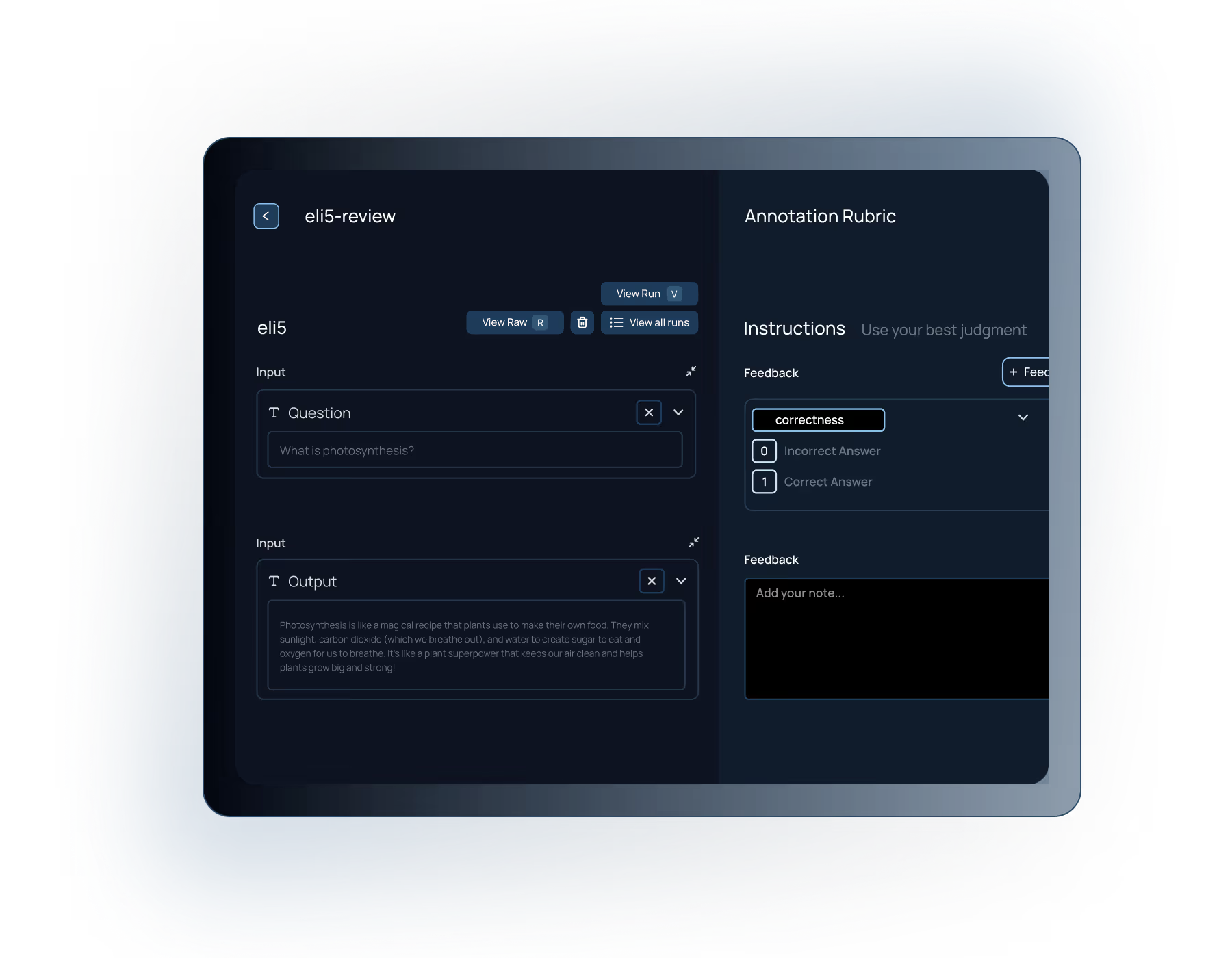

Gather expert feedback

Equip subject-matter experts to assess response quality or review specific attributes of your agent. Automatically assign runs for review, and annotate any part of your agent workflow to capture precise feedback.

- Embedded renderings of UI in review flow

- Auto send interesting traces for human review

- Shared scoring criteria to standardize reviewer feedback

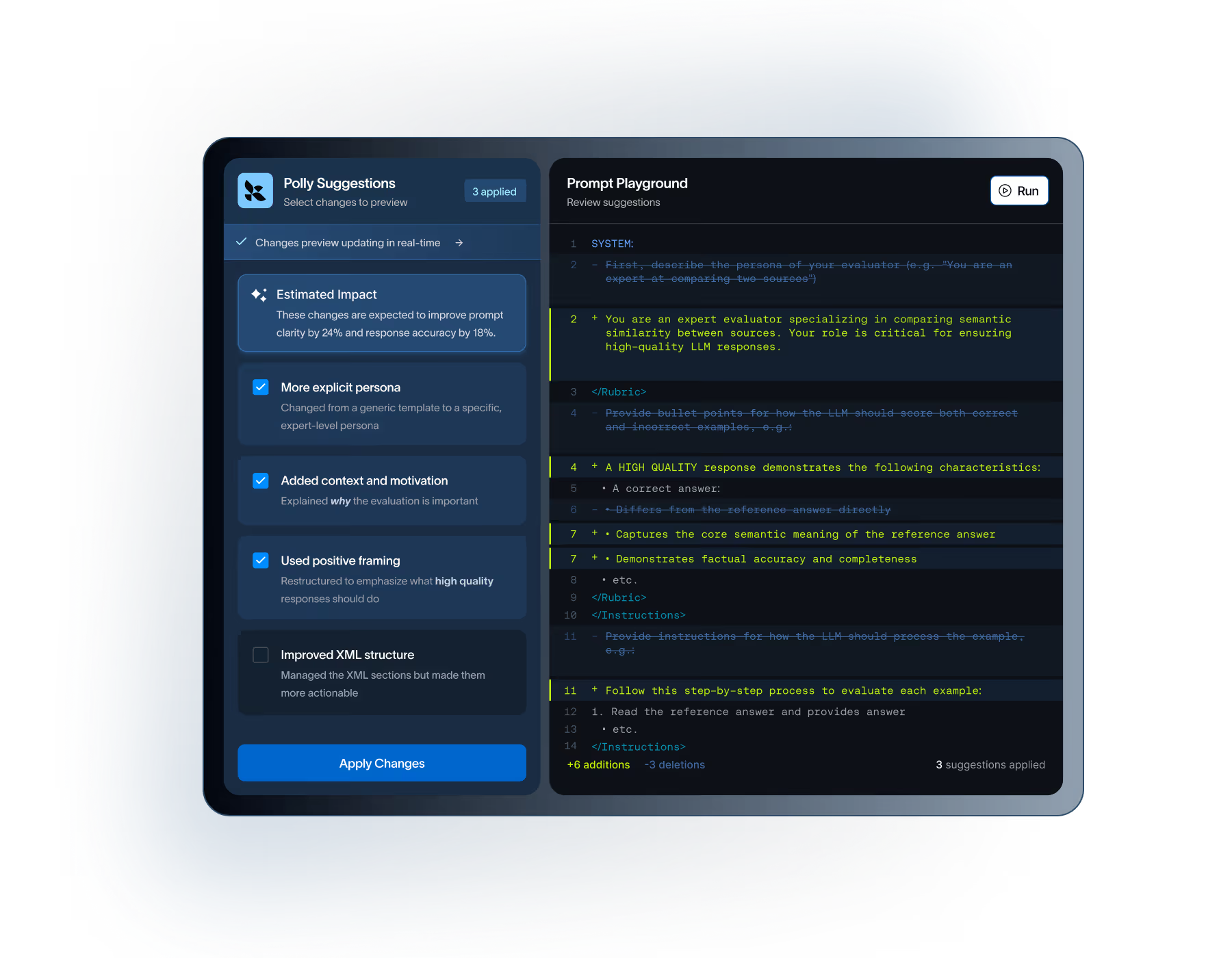

Iterate & collaborate on prompts

Experiment with prompts in the Playground, and compare outputs across different prompt versions or model providers. Use our AI Agent Polly to auto improve prompts. Scale spot checking by running an evaluation of the prompt on a larger dataset, all from the UI.

Resources for LangSmith Evaluation

FAQs for LangSmith Evaluation

LangSmith's evaluation framework supports multiple evaluator types: human evaluation through annotation queues, heuristic checks (like validating outputs or checking if code compiles), LLM-as-judge evaluators that score against criteria you define, and pairwise comparisons. You can also write custom evaluators in Python or TypeScript with any business logic you need, from correctness and ground truth matching to hallucination detection and guardrails validation.

LangSmith makes it easy for AI teams to collect expert feedback through annotation queues. Flag runs for review, assign them to subject-matter experts, and use that feedback to calibrate automated evaluation, improve prompts, or augment datasets with high-quality test cases.

LLM-as-judge evaluators don't always get it right. LangSmith lets you route samples to human reviewers who flag disagreements, helping you identify failure modes and edge cases. This feedback loop lets you iterate on and calibrate your automated evaluation metrics over time.

Offline evaluation runs against curated datasets during development to catch regressions before deployment. They act as unit tests for your LLM application. Online evaluation scores real-world production traffic in real-time to detect quality drift. LangSmith supports both as part of an end-to-end evaluation lifecycle.

Yes. You can use LangSmith Evaluation with or without Observability. For all plan types, you'll get access to both and only pay for what you use.

Agent evaluation in LangSmith captures the full trajectory of steps, tool calls, and reasoning your agent took. Define evaluators that score intermediate decisions and agent behavior to debug complex agent workflows and pinpoint where things went wrong.

Yes. LangSmith integrates with pytest, Vitest, and GitHub workflows so you can run evals on every PR or nightly build. Set thresholds on evaluation metrics and fail pipelines automatically when scores drop, bringing the same rigor as deterministic unit tests to your AI development process.

LangSmith's comparison view dashboards show results side-by-side across experiments. Run the same dataset against different prompt versions, model providers, or agent systems to visualize what's working and optimize performance with real benchmarks.

RAG evaluation separates retrieval quality from generation quality. LangSmith supports metrics like context precision (did you retrieve relevant documents?) and faithfulness (does the answer match the retrieved context?) helping you catch hallucinations and improve your retrieval pipelines independently.

No. LangSmith is framework-agnostic. Evaluate AI applications built with LangGraph, custom Python, or any other framework. Use the SDK or API to send traces from whatever stack your team runs.

Start by capturing production traces with LangSmith, then sample interesting or problematic runs into a dataset. Use LLM-as-judge evaluators to bootstrap initial labels, then refine with human annotation.

No. The LangSmith SDK uses an async callback handler that sends traces to a distributed collector. Your application performance is never impacted. If LangSmith experiences an incident, your agent keeps running normally.

LangSmith instances hosted at smith.langchain.com stores data in GCP us-central-1 or europe-west4. For enterprise-grade requirements, LangSmith can run on your Kubernetes cluster in AWS, GCP, or Azure so its fully self-hosted and data never leaves your environment. We will not train on your data. See our documentation for details.

We will not train on your data, and you own all rights to your data. See LangSmith Terms of Service for more information.

LangSmith has a free tier for development and small-scale production. Paid plans scale with trace volume. See our pricing page for details, or contact us for enterprise pricing.

Ready to build better agents through continuous evaluation?