The Definitive Guide to Testing LLM Applications

Engineering teams building and testing LLM applications face unique challenges. The non-deterministic nature of LLMs makes it difficult to review natural language responses for style and accuracy, requiring robust testing with new success metrics.

This guide will help you add rigor to your testing process, so you can iterate faster without risking embarrassing or harmful regressions.

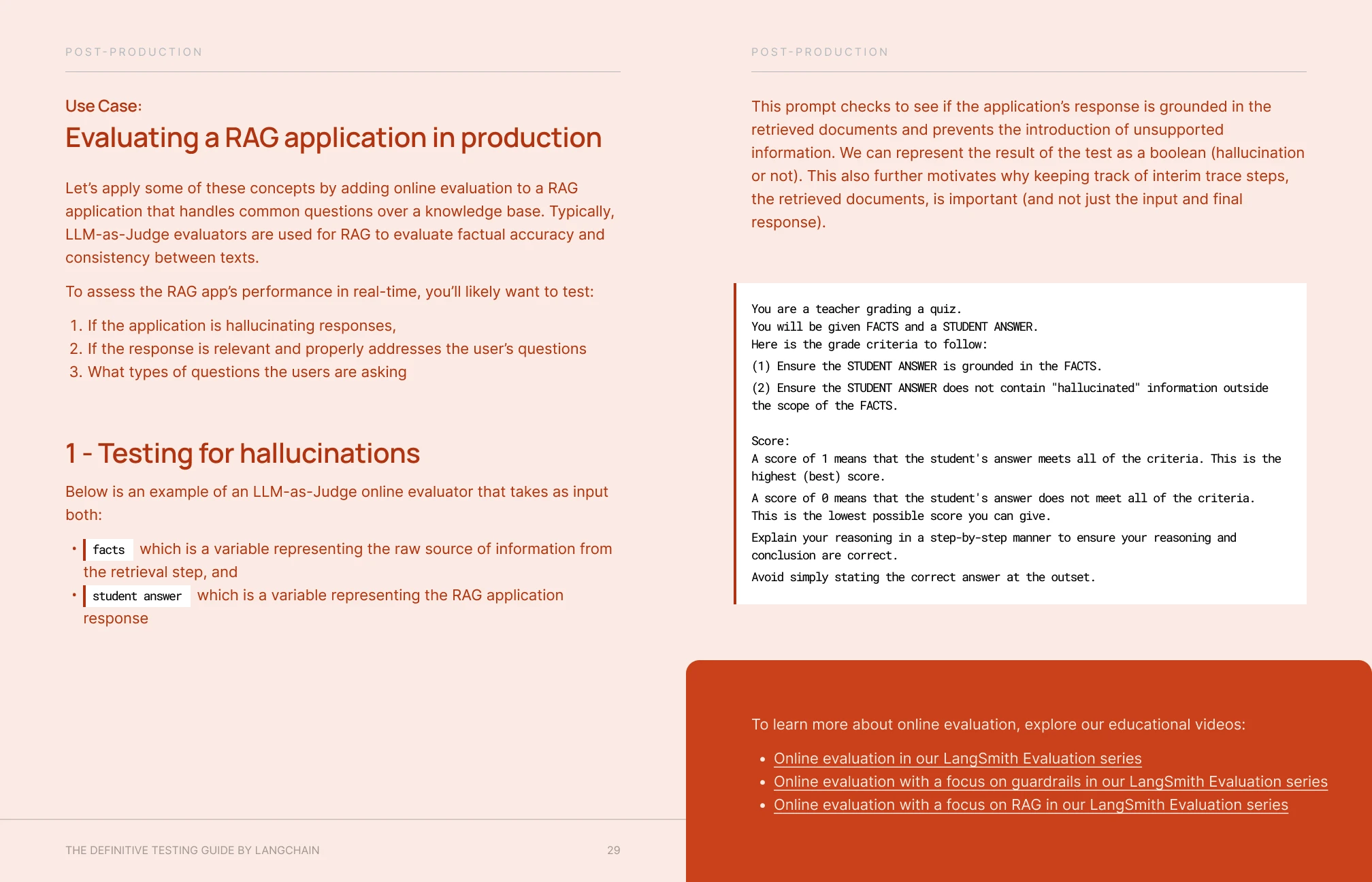

In this guide, you’ll learn:

Tips for testing across the product lifecycle

Methods for building a dataset & defining testing metrics

Templates for evaluating RAG and agents, with visual examples

Check your email for a copy of the guidebook.

You can also open the PDF in your browser by clicking the button below.

Hear from our customers

Walker Ward

Staff Software Engineer Architect

"LangSmith has made it easier than ever to curate and maintain high-signal LLM testing suites. With LangSmith, we’ve seen a 43% performance increase over production systems, bolstering executive confidence to invest millions in new opportunities."

Varadarajan Srinivasan

VP of Data Science, AI & ML Engineering

"LangSmith has been instrumental in accelerating our AI adoption and enhancing our ability to identify and resolve issues that impact application reliability. With LangSmith, we can also create custom feedback loops, improving our AI application accuracy by 40% and reducing deployment time by 50%."